Search results for MoE

AI & Enterprise

DeepSeek V4 seen having bigger impact than R1 thanks to strong price-performance

China's AI company DeepSeek has launched its new V4 models, drawing attention for offering open-source, near-frontier performance at a much lower price than Opus 4.7 or GPT-5.5. The V4 Pro and V4 Flash models were trained on about 33 trillion tokens and post benchmark results close to those rivals. Commentator Matthew Berman said pricing could pull U.S. companies toward DeepSeek, though geopolitical risks remain.

AI & Enterprise

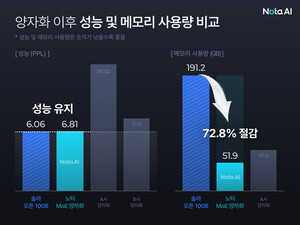

Nota tops Nvidia Nemotron hackathon overall

Nota, an AI model compression and optimisation company, won the overall top prize at the Nvidia Nemotron hackathon, finishing first among 20 teams. It used synthetic data generation technology specialised for mixture-of-experts (MoE) quantisation. The event, which ran in three tracks, was held to share research results from Nvidia's open-source AI model Nemotron and to strengthen domestic developers' practical skills.

Industry

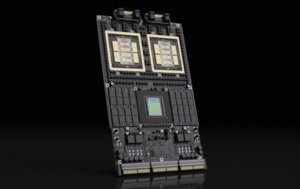

Nvidia shifts AI chip battleground from specs to end-to-end efficiency

Nvidia said it will shift competition in AI semiconductors from chip specifications to end-to-end efficiency across pre-training, post-training, inference and agents. It also disclosed measured results showing Blackwell-based GPUs deliver 55 times faster mixture-of-experts inference than the prior Hopper generation. Nvidia highlighted efficiency gains from a new numeric format and software advances, and introduced curriculum-based post-training results for its Nemotron 3 Nano model. It also said Korean partners are participating and unveiled a Korean-focused synthetic dataset.

-

AI & Enterprise

Why Google Gemma 4 is in the spotlight as an open-source AI game changer

-

AI & Enterprise

Google unveils open model Gemma 4, supports complex reasoning on low-power devices

-

AI & Enterprise

Medical, bio AI models prove global competitiveness, move to phase two development

-

AI & Enterprise

Cloud no longer needed? Ultra-large 400B-class LLM runs on iPhone in test

-

Industry

From GPUs to factory floors, Nvidia redefines manufacturing landscape

-

AI & Enterprise

Nota compresses Upstage's Solar 100B memory use by 72%

-

Games & Commerce

Kakao integrates AI organisation into AI Studio led by CEO Jung Shin-a

-

AI & Enterprise

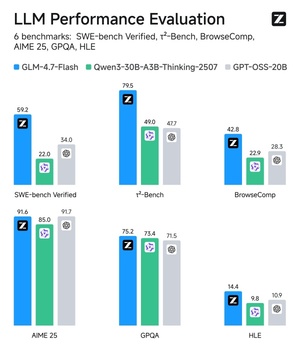

Z.ai unveils GLM-4.7-Flash, claims better performance than OpenAI GPT-OSS-20B

-

AI & Enterprise

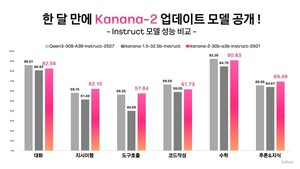

Kakao releases updated Kanana-2 open-source models

-

AI & Enterprise

SKT elite team releases A.X K1 technical report, cites math and coding performance

-

AI & Enterprise

Nvidia unveils DGX SuperPOD supercomputer for Rubin chips

-

AI & Enterprise

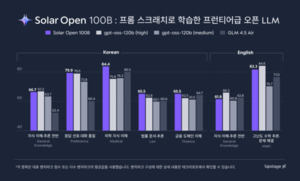

Upstage unveils in-house LLM Solar Open 100B as open source

-

Industry

Nvidia unveils next-generation AI platform Rubin