AI technology company Upstage said on Tuesday it has released its self-developed large language model (LLM) Solar Open 100B (Solar Open) as open source.

Solar Open is the first result of the Ministry of Science and ICT's "Independent AI Foundation Model Project," in which Upstage is participating as a lead company.

Upstage developed the model using a "from-scratch" approach, handling the entire process in-house, from data construction to training. It released Solar Open on the global open-source platform Hugging Face and also published a technical report detailing the development process and technical specifics.

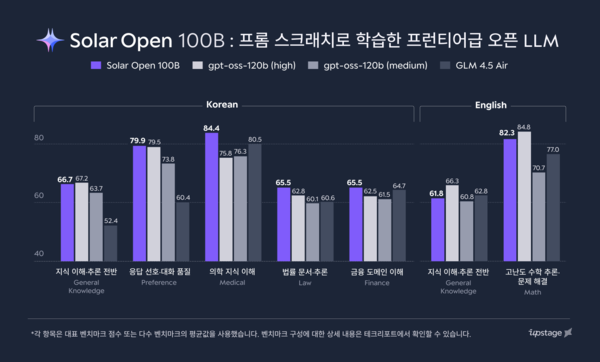

According to the company, Solar Open has 102 billion parameters. Upstage said that although the model is only about 15 percent the size of DeepSeek R1 (DeepSeek R1-0528-671B), one of China's leading AI models, it outperformed DeepSeek R1 on major benchmarks in three languages, scoring 110 percent of DeepSeek R1's result in Korean, 103 percent in English and 106 percent in Japanese.

On major Korean-language benchmarks, including Korean cultural understanding (Hae-Rae v1.1) and Korean knowledge (CLIcK), Solar Open scored more than twice as high as DeepSeek R1. The company also stressed that Solar Open's performance was 100 percent higher than that of OpenAI's similarly sized model, GPT-OSS-120B-Medium.

Solar Open also performed on par with DeepSeek R1 in advanced areas such as mathematics, complex instruction-following and agentic tasks. It was also competitive with OpenAI's GPT-OSS-120B-Medium in general knowledge and code writing.

Upstage attributed part of the performance gains to a high-quality pretraining dataset of about 20 trillion tokens.

To make up for the shortage of Korean-language data, Upstage trained the model on a variety of synthetic data along with specialised data from sectors including finance, law and medicine. It also refined a range of data training and filtering methodologies.

Upstage will release part of the dataset through the National Information Society Agency's (NIA) AI Hub, returning it as a public good to help invigorate the domestic AI research ecosystem.

On efficiency, Upstage said Solar Open uses a Mixture-of-Experts (MoE) architecture with 129 experts, activating only 12 billion of its parameters during actual computation.

Through GPU optimisation, Upstage also raised throughput, measured in tokens per second (TPS), by about 80 percent. It developed its own reinforcement learning (RL) framework, SnapPO, which cut the training period in half.

Upstage said it will work with Nota, Lablup, Flitto, the Korea Advanced Institute of Science and Technology (KAIST) and Sogang University, among others, which form an elite team in its consortium for the independent foundation model project, to step up development of industry-specific services with the goal of setting "a new standard of work opened by AI."

It plans to accelerate AX (AI transformation) by working with companies in each field, including Korea Financial Telecommunications and Clearings Institute (finance), Law&Company (legal), Makinarocks (defence and manufacturing), VUNO (healthcare), OKESTRO (public sector) and Day1 Company (education). It will also expand into global markets through Allganize and Upstage's U.S. and Japan branches.

Upstage CEO Sung Hoon Kim described Solar Open as a model the company trained independently from the start, calling it "the most Korean yet also global AI," one that deeply understands Korea's emotions and linguistic context. He said the release of Solar Open will be an important turning point in opening an era of Korean frontier AI.