[DigitalToday Kyung-min Hong (홍경민) intern reporter] SanDisk has released its SanDisk Pseudo Random (SP Random) algorithm as open source, a move aimed at improving data centre operating efficiency by cutting SSD preconditioning time from about a week to roughly 6.5 hours.

According to IT outlet TechRadar on May 12, SanDisk recently published SP Random, its core algorithm, as an extension to the storage testing tool fio (Flexible I/O Tester), making it freely usable across the industry.

Newly purchased enterprise SSDs must be preconditioned to stabilise their performance before they go into production. During this process the drive is filled with data while its controller carries out internal management tasks such as garbage collection and wear levelling. Only once this background activity reaches steady state does the drive's input-output (IO) performance become consistent enough to rely on in production environments.
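To make "steady state" concrete, the sketch below checks whether the last few throughput measurements have settled. It is an illustration only: the function name `is_steady_state`, the five-round window, and the 20 percent range and 10 percent drift thresholds are assumptions loosely modelled on the kind of criteria used in industry SSD test specifications, not something SanDisk or the article specifies.

```python
from statistics import mean

def is_steady_state(samples, window=5, max_range=0.20, max_drift=0.10):
    """Return True if the last `window` samples look settled.

    Hypothetical criteria (assumed for illustration):
      1. max - min within the window is at most `max_range` of the average
      2. the best-fit line across the window drifts by at most `max_drift`
         of the average
    """
    if window < 2 or len(samples) < window:
        return False
    y = list(samples[-window:])
    avg = mean(y)
    if avg <= 0:
        return False

    # Criterion 1: the spread of the measurements is small.
    if max(y) - min(y) > max_range * avg:
        return False

    # Criterion 2: least-squares slope, converted to total drift over the window.
    x = list(range(window))
    x_mean = mean(x)
    slope = sum((xi - x_mean) * (yi - avg) for xi, yi in zip(x, y)) / sum(
        (xi - x_mean) ** 2 for xi in x
    )
    drift = abs(slope) * (window - 1)
    return drift <= max_drift * avg

# Made-up IOPS readings (in thousands) from successive test rounds.
fresh_drive = [900, 700, 500, 400, 350]    # still falling sharply
settled_drive = [100, 102, 99, 101, 100]   # flat within noise
print(is_steady_state(fresh_drive))
print(is_steady_state(settled_drive))
```

A freshly unboxed drive typically fails such a check because its early performance is much higher than what it can sustain; preconditioning is the work needed to get the readings into the flat region.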

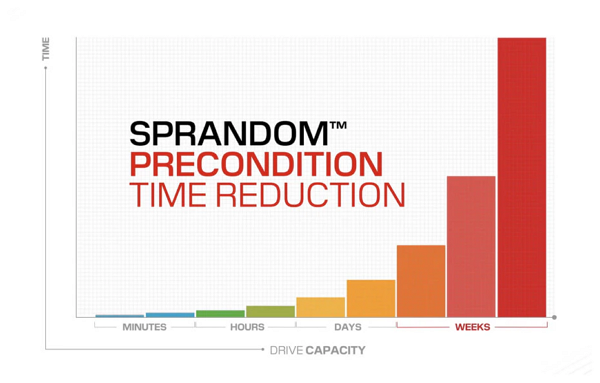

The conventional method required writing the drive's full capacity more than twice over, which took about 160 hours, or close to a week, for a 128TB drive. With SP Random, the same task can be completed in just 6.5 hours. For a 256TB high-capacity drive, preconditioning that previously took 250 hours was cut to the same 6.5 hours, a reduction of about 97 percent compared with the existing approach.
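The savings can be checked with simple arithmetic from the hours reported above. The helper name `time_saved` is made up for this illustration; the input figures are the ones quoted in the article.

```python
def time_saved(conventional_hours: float, new_hours: float) -> tuple[float, float]:
    """Return (hours saved, percentage saved) for one drive."""
    saved = conventional_hours - new_hours
    return saved, 100 * saved / conventional_hours

SP_RANDOM_HOURS = 6.5  # reported preconditioning time with SP Random

# (capacity in TB, reported conventional preconditioning time in hours)
for capacity_tb, conventional in [(128, 160.0), (256, 250.0)]:
    saved, pct = time_saved(conventional, SP_RANDOM_HOURS)
    print(f"{capacity_tb}TB: {saved:.1f} h saved ({pct:.1f}%)")

# 128TB: 153.5 h saved (95.9%)
# 256TB: 243.5 h saved (97.4%)
```

On these figures the 128TB case works out to roughly 96 percent and the 256TB case to roughly 97 percent, so the 97 percent headline number corresponds to the larger drive.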

The key to the sharp reduction lies in SP Random's calculation method. Instead of writing data multiple times, it pre-calculates how overprovisioning will be distributed and completes preconditioning with a single physical write pass. Its mathematical design divides the drive into overlapping regions and adjusts the write distribution by address, minimising the resources needed to stabilise performance.

SanDisk made the technology open source so that the global storage industry can adopt it immediately. This is expected to bring practical benefits to hyperscale data centres and AI infrastructure operators that need to deploy SSDs at scale and at speed. Cutting more than 150 hours of lead time per drive translates directly into faster rollouts and lower operating costs for cloud operators.

AI workloads, in which thousands of drives operate in parallel, are particularly sensitive to even slight performance fluctuations. In AI training and inference environments where consistent performance is critical, SP Random is expected to become a key technology for improving infrastructure efficiency. Consumer PCs, by contrast, typically use a single drive, so users are unlikely to notice much benefit from shorter preconditioning.

Ultimately, SanDisk's decision is seen as offering a practical solution for companies that manage vast storage arrays. With its mathematical design delivering tangible time savings, SP Random is expected to bring meaningful changes to the pace of AI infrastructure buildouts and to overall operating efficiency across global data centres.