[DigitalToday reporter Jinju Hong] An open-source tool called Deepclaude has emerged that keeps the autonomous coding-agent experience of Claude Code while sharply lowering model costs. Developers keep the existing interface and workflow and swap only the underlying model backend, for example to DeepSeek V4 Pro or to models served through OpenRouter.

On May 11 (local time), online media outlet Gigazine reported that Deepclaude is designed to preserve Claude Code’s autonomous agent loop and command-line interface (CLI) usability while switching model calls from Anthropic to other backends. Its GitHub repository description sums up the pitch as "Same UX, 17x cheaper."

Claude Code is a coding agent that supports reading and editing files, running Bash, Git operations and a multi-step autonomous work loop. When a developer gives instructions in natural language, it modifies code, runs commands and continues the workflow. However, cost has been cited as a burden, because heavy use effectively requires an Anthropic plan priced at around $200 a month.

Deepclaude leaves this structure in place and reroutes only the model API calls to other backends. It can connect to DeepSeek V4 Pro, OpenRouter, Fireworks AI and Anthropic-compatible APIs. Developers can use cheaper models while keeping much of the existing Claude Code operating experience intact.

The supported functions go beyond simple chat. Deepclaude can run most of Claude Code’s core development workflow, including reading, writing and editing files; Bash and PowerShell execution; Glob and Grep search; a multi-step autonomous loop; sub-agent execution; Git operations; and project initialization. The focus is not simply on changing a chatbot model, but on running the development agent loop itself on lower-cost models.

It is not a complete replacement. DeepSeek’s Anthropic-compatible endpoint does not support image input, and MCP server tools do not work in the compatibility layer. Prompt caching relies on DeepSeek’s automatic caching instead of Anthropic’s 'cache_control' method. Compatibility is therefore broad but not identical to the original environment.

Performance differences also vary by task. According to the Deepclaude description, DeepSeek V4 Pro performs at a level close to Claude Opus on about 80 percent of everyday development tasks. For some tasks that require complex reasoning, Claude Opus is still assessed as having the edge. The practical usage scenario it presents is to handle general work with DeepSeek models and switch back to Anthropic models only for difficult problems.

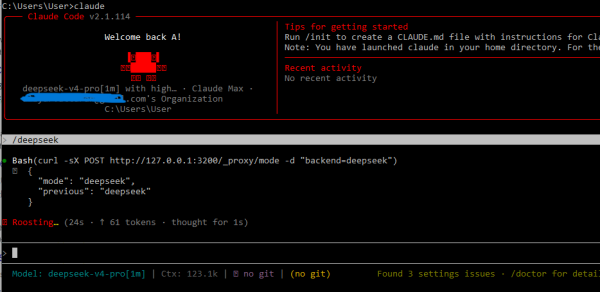

Deepclaude can also switch backends in real time during a session. A local proxy server runs at localhost:3200 and forwards Claude Code’s model calls to the currently selected backend. This allows users to move between DeepSeek, OpenRouter and Anthropic without restarting.
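The forwarding idea can be illustrated with a minimal sketch. It is not Deepclaude's actual code: the `BackendSwitch` class, the backend table and the placeholder hosts below are all hypothetical, and the sketch only shows how a proxy could map an incoming request path to whichever upstream is currently selected.

```python
from dataclasses import dataclass

# Hypothetical backend table. The hosts are placeholders for illustration,
# not the real endpoints that Deepclaude or any provider uses.
BACKENDS = {
    "deepseek": "https://deepseek.example/anthropic",
    "openrouter": "https://openrouter.example/api",
    "anthropic": "https://anthropic.example",
}


@dataclass
class BackendSwitch:
    """Holds the currently selected backend for a running proxy (assumed design)."""

    active: str = "deepseek"

    def select(self, name: str) -> None:
        # Reject unknown names so a typo cannot silently break forwarding.
        if name not in BACKENDS:
            raise ValueError(f"unknown backend: {name}")
        self.active = name

    def upstream_url(self, request_path: str) -> str:
        # Join the selected base URL and the incoming path with exactly one slash.
        base = BACKENDS[self.active].rstrip("/")
        return f"{base}/{request_path.lstrip('/')}"


if __name__ == "__main__":
    switch = BackendSwitch()
    print(switch.upstream_url("/v1/messages"))  # routed to the default backend
    switch.select("anthropic")                  # changed mid-session, no restart
    print(switch.upstream_url("/v1/messages"))
```

Because the client keeps talking to the same local address, changing `active` is enough to redirect subsequent calls, which is the property that makes switching without a restart possible.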

The cost difference is significant. DeepSeek V4 Pro is priced at $0.44 per 1 million input tokens and $0.87 per 1 million output tokens. By contrast, Anthropic’s Claude Opus 4.7 costs $5 per 1 million input tokens and $25 per 1 million output tokens. DeepSeek’s prices reflect a current discount promotion, but the model is described as likely to remain relatively inexpensive even after the discount ends.
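How large the gap is depends on the mix of input and output tokens. The sketch below works through the arithmetic using only the per-token prices quoted above; the 4:1 input-to-output workload is an assumption chosen for illustration, and the article does not say how the "17x" figure in the repository description was derived.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Price:
    """USD price per 1 million tokens, as quoted in the article."""

    input_per_million: float
    output_per_million: float


DEEPSEEK_V4_PRO = Price(input_per_million=0.44, output_per_million=0.87)
CLAUDE_OPUS_4_7 = Price(input_per_million=5.00, output_per_million=25.00)


def cost_usd(price: Price, input_tokens: int, output_tokens: int) -> float:
    """Total cost in USD for a given number of input and output tokens."""
    total = (input_tokens * price.input_per_million
             + output_tokens * price.output_per_million)
    return total / 1_000_000


def cost_ratio(input_tokens: int, output_tokens: int) -> float:
    """How many times more the same workload costs on Opus than on DeepSeek."""
    return (cost_usd(CLAUDE_OPUS_4_7, input_tokens, output_tokens)
            / cost_usd(DEEPSEEK_V4_PRO, input_tokens, output_tokens))


if __name__ == "__main__":
    # Input-only and output-only workloads bound the possible ratios.
    print(f"input only : {cost_ratio(1_000_000, 0):.1f}x")          # 11.4x
    print(f"output only: {cost_ratio(0, 1_000_000):.1f}x")          # 28.7x
    # Assumed mix: 4 million input tokens per 1 million output tokens.
    print(f"4:1 mix    : {cost_ratio(4_000_000, 1_000_000):.1f}x")  # 17.1x
```

At these prices the ratio ranges from about 11x for input-heavy work to about 29x for output-heavy work, so a single multiplier only holds for a particular token mix.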

Some in the industry say Deepclaude points to a new direction in the coding-agent market. Where AI services and development tools were previously tightly coupled, an approach could spread in which the working environment stays the same and only the model is swapped to fit the situation. As more developers look to balance cost and performance task by task, Deepclaude is drawing attention as a representative example of this trend.