Nvidia is shifting the focus of its next-generation AI infrastructure strategy to coordination among rack-scale systems, effectively declaring an end to the GPU specification race that has so far centred on single devices. In doing so, Nvidia is redefining itself from a chip seller into an infrastructure company that designs entire AI factories. Because Nvidia is the world's largest buyer and supplier of AI semiconductors, its change of strategy is expected to alter the investment calculus across the entire supply chain.

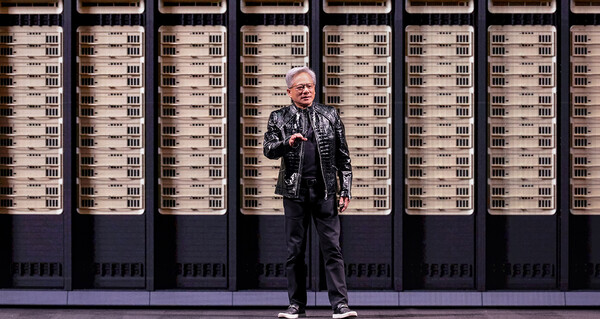

Nvidia is officially announcing five rack-scale systems based on a common MGX architecture at the GTC 2026 keynote in San Jose, California. The systems, unveiled at a media briefing on the 16th, are Vera Rubin NVL72, Groq 3 LPX, a Vera CPU rack, BlueField-4 STX storage and Spectrum-6 SPX, and together they form a single AI supercomputer.

Nvidia described this as a "platform that enables the largest infrastructure buildout in history". The five rack systems are integrated through the common MGX architecture, and each rack takes on and relieves a different bottleneck. The company said all seven Rubin-generation chips have entered mass production.

The strategy reflects an intention to resolve context and decode bottlenecks in AI data centres at the system level, not at the chip level.

Nvidia has physically combined LPU (language processing unit) technology secured through the Groq acquisition with its GPU rack. The LPX rack carries 256 LPUs and 128 gigabytes of SRAM, and connects to the Vera Rubin rack through a dedicated interconnect based on Spectrum-X. The two racks work together, splitting the decoding of every token of an AI model by layer: the GPU handles attention computation while the LPU takes the FFN (feed-forward network) layer.
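The layer-wise split described above can be illustrated with a toy decode step. This is only a sketch under stated assumptions, not Nvidia's implementation: the function names, the single-head attention, the ReLU feed-forward block and the `trace` list that records which side would run each stage are all hypothetical, and no real interconnect or hardware is modelled.

```python
import math

def attention(x, kv_cache):
    """Toy single-head attention: the new token attends over cached vectors."""
    kv_cache.append(x)  # in this sketch the KV cache lives on the attention (GPU) side
    scale = math.sqrt(len(x))
    scores = [sum(a * b for a, b in zip(x, k)) / scale for k in kv_cache]
    peak = max(scores)  # subtract the max for a numerically stable softmax
    weights = [math.exp(s - peak) for s in scores]
    total = sum(weights)
    return [
        sum(w * v[i] for w, v in zip(weights, kv_cache)) / total
        for i in range(len(x))
    ]

def ffn(x, w_in, w_out):
    """Toy feed-forward block: linear, ReLU, linear (weights are lists of rows)."""
    hidden = [max(0.0, sum(xi * w for xi, w in zip(x, row))) for row in w_in]
    return [sum(h * w for h, w in zip(hidden, row)) for row in w_out]

def decode_step(x, layers, trace):
    """Run one token through every layer, alternating between the two sides.

    `trace` records (side, layer index, stage); the "gpu"/"lpu" labels are
    illustrative only and stand in for the hand-off between the two racks.
    """
    for idx, layer in enumerate(layers):
        attn_out = attention(x, layer["kv_cache"])
        trace.append(("gpu", idx, "attention"))
        x = [a + b for a, b in zip(x, attn_out)]  # residual connection

        ffn_out = ffn(x, layer["w_in"], layer["w_out"])
        trace.append(("lpu", idx, "ffn"))
        x = [a + b for a, b in zip(x, ffn_out)]  # residual connection
    return x
```

Calling `decode_step` with a list of layer dictionaries (each holding an empty `kv_cache` plus `w_in` and `w_out` weight rows) yields a trace that alternates between the two sides once per layer, which is the hand-off pattern the dedicated interconnect would have to carry for every generated token.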

With this split, Nvidia said the system reaches 500 tokens per second on a 1-trillion-parameter model at a service price of $45 per million tokens, lifting throughput 35-fold from the existing level. The LPX rack is expected to launch in the second half of this year, at the same time as Vera Rubin.

Korean memory and materials and equipment suppliers may gain twice from HBM4 and AI factories.

The structural change Nvidia has declared also directly affects the memory market. Vera Rubin NVL72 carries 288 gigabytes of HBM4 per GPU, whereas each LPU in the LPX rack relies on 500 megabytes of SRAM (128 gigabytes across the rack's 256 LPUs), so LPX does not compete directly with HBM.
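The gap between the two memory pools is easier to see with the arithmetic written out. The sketch below rests on three assumptions that the article does not state outright: that the 500-megabyte figure is per LPU (which matches the 128 gigabytes across 256 LPUs cited earlier), that units are decimal (1 GB = 1,000 MB), and that an NVL72 rack holds 72 GPUs, as the product name suggests.

```python
# Figures quoted in the article
LPUS_PER_LPX_RACK = 256
SRAM_PER_LPU_MB = 500       # assumption: the 500 MB figure is per LPU
HBM4_PER_GPU_GB = 288

# Assumption: 72 GPUs per rack, inferred from the "NVL72" name
GPUS_PER_NVL72_RACK = 72

# Total on-chip SRAM in one LPX rack, in decimal gigabytes
lpx_sram_gb = LPUS_PER_LPX_RACK * SRAM_PER_LPU_MB / 1000

# Total HBM4 in one Vera Rubin NVL72 rack, in gigabytes
nvl72_hbm_gb = GPUS_PER_NVL72_RACK * HBM4_PER_GPU_GB

# How many times larger the HBM4 pool is than the SRAM pool
hbm_to_sram_ratio = nvl72_hbm_gb / lpx_sram_gb

print(f"LPX rack SRAM:   {lpx_sram_gb:.0f} GB")
print(f"NVL72 rack HBM4: {nvl72_hbm_gb} GB")
print(f"HBM4 / SRAM:     {hbm_to_sram_ratio:.0f}x")
```

Under these assumptions the GPU rack holds roughly two orders of magnitude more memory than the LPX rack, which is consistent with the article's point that the SRAM-based rack complements HBM rather than replacing it.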

Instead, as the GPU rack's role concentrates on higher value-added attention computation, HBM4 packing density across the Rubin platform rises compared with the previous generation. Nvidia's pattern of raising HBM capacity per GPU with each new generation therefore continues with Rubin.

The industry views this GTC announcement as a qualitative turning point for AI infrastructure investment. If rack-level integrated design spreads, the supply chain participants most likely to benefit are those with system integration capabilities, rather than those competing on chip performance alone.

Analysts say Korean memory makers gain a direct opportunity to expand HBM4 orders, while rising demand for power and equipment from AI factory expansion is expected to spill over to related materials and equipment companies. The gains across Nvidia's supply chain are expected to be broader than when it supplied GPUs alone.

TrendForce forecasts that demand for the transition to HBM3E and HBM4 will rise rapidly this year. SK Hynix is maintaining the top spot in HBM market share, while Samsung Electronics is preparing to ramp up HBM4 supplies.

Agentic AI is set to drive CPU demand beyond GPUs.

New sources of demand are opening beyond GPUs. Nvidia said that as agent-based AI workloads spread, CPU loads are rising sharply in tool-calling processes such as code compilation, SQL queries and Python execution. It explained that it incorporated Vera, a CPU designed in-house, into the Rubin platform to meet that demand.

Vera adopts a new core architecture, Olympus Core, optimised for AI execution. It delivers three times the memory bandwidth per core, twice the energy efficiency and 1.5 times the single-thread performance of x86, Nvidia said. One Vera CPU rack includes 256 Vera CPUs, 400 terabytes of memory and 300 terabytes per second of memory bandwidth. Nvidia said Vera recorded a twofold performance improvement over the previous-generation Grace in Meta's OpenDCPerf benchmark.
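Dividing the rack-level figures by the CPU count gives a rough per-CPU picture. This is a back-of-the-envelope sketch that assumes memory and bandwidth are spread evenly across the 256 CPUs, which the article does not confirm; the helper name `per_cpu` is hypothetical.

```python
# Rack-level figures quoted in the article
CPUS_PER_RACK = 256
RACK_MEMORY_TB = 400
RACK_BANDWIDTH_TB_PER_S = 300

def per_cpu(rack_total, cpus=CPUS_PER_RACK):
    """Even split of a rack-level total across its CPUs (an assumption)."""
    return rack_total / cpus

memory_tb_per_cpu = per_cpu(RACK_MEMORY_TB)
bandwidth_tb_per_s_per_cpu = per_cpu(RACK_BANDWIDTH_TB_PER_S)

print(f"Memory per CPU:    {memory_tb_per_cpu:.2f} TB")
print(f"Bandwidth per CPU: {bandwidth_tb_per_s_per_cpu:.2f} TB/s")
```

On an even split, each Vera CPU would have a little over 1.5 terabytes of memory and a little under 1.2 terabytes per second of memory bandwidth.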

Growing demand for agentic AI will change the computing configuration within AI factories. Nvidia is also set to announce the Rubin DSX platform, which it says can deploy 30 percent more AI computing resources within a fixed power budget. As the push to improve AI factory operational efficiency accelerates, structural growth is expected in the market for power and cooling equipment. Nvidia said it is building the platform with more than 200 data infrastructure partners.