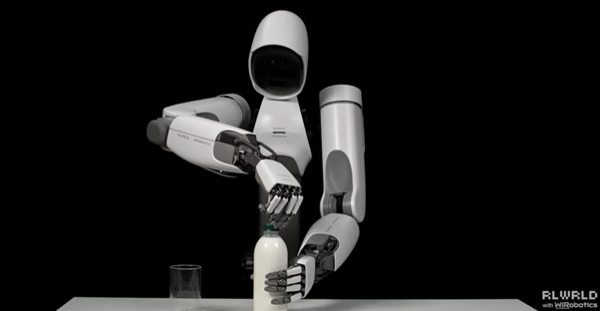

Physical AI company RLWRLD said on Monday that it is advancing the training of its Allex robot and five-finger robotic hand so they can handle objects stably. The work builds on NVIDIA Isaac GR00T N1.5, an open humanoid robot AI model released by NVIDIA Research.

The company said grasping and moving an object may look like a simple action, but in practice it requires a sequence completed in one continuous flow: perceiving the surroundings through cameras, understanding the situation, and then finely adjusting the fingers to move without error. Because a five-finger hand has many finger joints, its movements are complex, and even small errors can quickly lead to failure. To raise precision manipulation performance, RLWRLD said it chose to optimize and further train (fine-tune) the pre-trained open model GR00T N1.5 to match its robots' movements.

The company added that because the desired performance is difficult to reach in a single round of training, it is critical to build a setup that supports rapid experimentation and repeated iteration.

Jae-kyung Bae, RLWRLD's chief technology officer, said the company carried out additional training optimized for its robots on top of GR00T N1.5 and, through rapid fine-tuning, sharply increased the speed of its repeated experiments. He said this repeated training fills in previously insufficient data and optimizes learning, bringing the robot hand to a level where it can be used at real-world sites.

RLWRLD plans to release its own VLA (Vision-Language-Action) model early this year. The company said the model uses a large-scale architecture to strengthen language and vision understanding, which it expects will further improve the precision of robotic hand manipulation and the freedom of the hand's movements.