SK Hynix will unveil its HBM4 16-high 48GB product at CES 2026. The company said on Tuesday that it will open a customer exhibition space at the Venetian Expo in Las Vegas from Jan. 6 to 9 local time to showcase next-generation AI memory solutions. It will operate only the customer exhibition space at this year’s CES, without a joint SK Group booth.

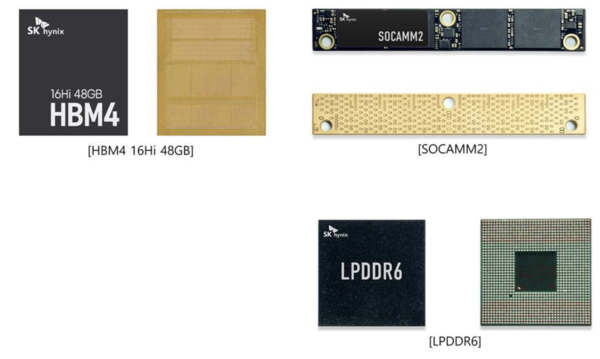

At the show, SK Hynix will debut its next-generation HBM product, the HBM4 16-high 48GB. It follows the HBM4 12-high 36GB, which delivers an industry-leading speed of 11.7 Gbps.
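The stack figures quoted above can be unpacked with some quick arithmetic. The sketch below is illustrative only: it assumes the 11.7 Gbps figure is a per-pin data rate and that each stack uses the 2048-bit interface defined in the JEDEC HBM4 standard, neither of which is stated in the announcement. The function names are hypothetical.

```python
# Back-of-the-envelope arithmetic for the HBM4 figures in the article.
# Assumptions (not from the announcement): 11.7 Gbps is a per-pin rate,
# and each stack exposes a 2048-bit interface as in the JEDEC HBM4 standard.

def per_layer_capacity_gb(stack_capacity_gb: float, layers: int) -> float:
    """Capacity contributed by each DRAM layer in a stack, in GB."""
    return stack_capacity_gb / layers


def peak_stack_bandwidth_gb_per_s(pin_speed_gbps: float,
                                  interface_width_bits: int = 2048) -> float:
    """Theoretical peak bandwidth of one stack, in GB/s (8 bits per byte)."""
    return pin_speed_gbps * interface_width_bits / 8


hbm4_16hi_layer_gb = per_layer_capacity_gb(48, 16)   # 16-high 48GB stack
hbm4_12hi_layer_gb = per_layer_capacity_gb(36, 12)   # 12-high 36GB stack
hbm4_12hi_peak_bw = peak_stack_bandwidth_gb_per_s(11.7)

print(f"16-high: {hbm4_16hi_layer_gb} GB per layer")
print(f"12-high: {hbm4_12hi_layer_gb} GB per layer")
print(f"12-high peak bandwidth: {hbm4_12hi_peak_bw:.1f} GB/s per stack")
```

Under these assumptions both products work out to 3 GB per layer, which suggests the 16-high part gains its capacity from additional layers rather than denser dies, and the 12-high part peaks at roughly 3 TB/s per stack.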

It will also display its HBM3E 12-high 36GB product alongside the latest AI server GPU module from a global customer that uses it, to highlight the memory's role within AI systems.

In addition to HBM, it will showcase a lineup of general-purpose memory products suited to AI workloads, including SOCAMM2, a low-power memory module specialized for AI servers. It will also unveil LPDDR6, which improves data processing speed and power efficiency over existing products to enable on-device AI.

In NAND, it will present a 321-layer 2Tb QLC product suitable for ultra-high-capacity eSSD, where demand is surging as AI data center construction expands. The product delivers what the company described as the highest level of integration currently available and improves power efficiency and performance compared with the previous generation of QLC products.

The company will also run an "AI system demo zone" where visitors can see how its memory products for AI systems connect to form an AI ecosystem.

There it will exhibit and demonstrate cHBM (custom HBM), tailored to the requirements of a specific customer's AI chip or system; AiMX accelerator cards, which use PIM semiconductors for low-cost, high-efficiency generative AI; CuD (Compute-using-DRAM), which performs computation directly in memory; CMM-Ax, which integrates compute functions into CXL memory; and data-aware CSD, which recognizes, analyzes and processes data on its own.

For cHBM, reflecting strong customer interest, it has prepared a large exhibit that lets visitors see the internal structure. As competition in the AI market shifts from raw performance to inference efficiency and cost optimization, the exhibit visualizes a new design approach that moves some computing and control functions previously handled by GPU- or ASIC-based AI chips into the HBM itself.

Kim Juseon, president and chief marketing officer of AI infrastructure at SK Hynix, said, "As innovation sparked by AI is accelerating further, customers’ technical requirements are also rapidly evolving." He added, "We will meet customer needs with differentiated memory solutions and, at the same time, create new value based on close collaboration with customers for the development of the AI ecosystem."