Anthropic has made an initial limited release of Claude Mythos, a new AI model, to only about 50 organisations because it is too good at finding and exploiting software vulnerabilities. The project is called Glasswing.

Anthropic said Mythos found thousands of vulnerabilities across all major operating systems and browsers, including a 27-year-old OpenBSD bug and a 16-year-old FFmpeg flaw. It also generated 181 pieces of attack code using a vulnerability it found in Firefox. That is a striking figure given that Anthropic's previous flagship model produced only two.

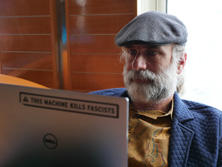

Bruce Schneier, a cryptographer and security expert, said in a recent post on his website that the approach is close to the responsible disclosure that security researchers have long demanded, but that there is too little information to assess Anthropic's decision. What has been released so far are impressive success stories, he said, and they give no way of knowing how often Mythos was wrong.

"Anthropic says external security contractors agreed with 89 percent of the vulnerability severity ratings Mythos assigned," he said. "That is impressive but incomplete. Independent researchers studying similar models found that the better an AI is at spotting real bugs, the more it produces plausible wrong answers that flag sound, already-patched code as vulnerable. Without knowing how often Mythos was wrong, you cannot judge it on the 89 percent figure alone."

Schneier said it matters. "A model that precisely finds and exploits hundreds of vulnerabilities is a game changer, but a model that spews thousands of false positives still requires skilled humans," he said. "If you do not know Mythos' error rate, you cannot tell whether the cases Anthropic released represent the whole picture or were cherry-picked."
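
The arithmetic behind that point can be sketched in a few lines of Python. This is a minimal illustration, not Anthropic's evaluation method: the `ScanResult` class and both sets of counts are hypothetical, chosen only to show that two models can share the same 89 percent severity agreement while demanding very different amounts of human review.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScanResult:
    """Hypothetical summary of one model's vulnerability reports."""
    real_bugs: int             # reports confirmed as genuine vulnerabilities
    false_alarms: int          # reports that turned out not to be vulnerabilities
    severity_agreement: float  # share of severity ratings reviewers agreed with

    @property
    def precision(self) -> float:
        """Fraction of all reports that were genuine."""
        reported = self.real_bugs + self.false_alarms
        return self.real_bugs / reported if reported else 0.0

    @property
    def reports_per_real_bug(self) -> float:
        """How many reports a human must triage to reach one genuine bug."""
        if self.real_bugs == 0:
            return float("inf")
        return (self.real_bugs + self.false_alarms) / self.real_bugs


# Invented numbers: identical severity agreement, very different error rates.
precise_model = ScanResult(real_bugs=900, false_alarms=100, severity_agreement=0.89)
noisy_model = ScanResult(real_bugs=900, false_alarms=9_100, severity_agreement=0.89)

for label, result in (("precise", precise_model), ("noisy", noisy_model)):
    print(
        f"{label}: agreement={result.severity_agreement:.0%}, "
        f"precision={result.precision:.0%}, "
        f"reports per real bug={result.reports_per_real_bug:.1f}"
    )
```

In this made-up comparison both models report the same headline agreement figure, yet the second would require roughly ten times as much expert triage per genuine bug, which is why the error rate matters.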

LLMs like Mythos work well when given inputs similar to the data they were trained on. In software terms, Mythos was trained heavily on open-source projects with publicly available code, major browsers, the Linux kernel and popular web frameworks.

Focusing early access on the major vendors of such software is reasonable, since it lets them patch before attackers can exploit the flaws. The picture changes, however, in software domains that are poorly represented in the training data.

"In industrial control systems, medical device firmware, bespoke financial infrastructure, regional bank software and old embedded systems, Mythos will have difficulty finding vulnerabilities," Schneier said. "An attacker with expertise in those areas could use Mythos' advanced reasoning capabilities as a weapon to target systems where Anthropic engineers do not have expertise. The risk is not that Mythos fails in that domain. It is that it succeeds in the hands of a specialised attacker."

Schneier stressed that expanding access to cardiologists who specialise in medical-device security, control-system engineers, and researchers working in less well-known languages and ecosystems would reduce that asymmetry. "No matter how well you choose, 50 companies cannot replace the expertise distributed across the broader research community," he said. "Anthropic is a private company. With limits in manpower, budget and expertise, it is unilaterally deciding which critical infrastructure to defend first, and it will miss things. If what it misses is hospital or power-grid software, the cost is paid by people who had no say."

Security risks from AI models are not limited to Mythos. "OpenAI also said it would not release GPT-5.3-Codex to the public because it is too dangerous, and the security firm Aisle reproduced many of the cases Anthropic released using smaller, cheaper open-source AI models," Schneier said.

Schneier said regulation is ultimately needed, but that crafting sound regulation takes time and extended discussion. For now, he said, companies like Anthropic should share more information with a broader community.

"I am not saying powerful models like Mythos should be widely released," he said. "They should share as much data and information as possible so that informed collective decisions can be made. They should also support an international cooperation framework for independent audits, mandatory disclosure of aggregate performance metrics, and access for academic and civil-society researchers."