Upstage, the developer of Solar Open 100B, denied claims that the model was copied and fine-tuned from a Chinese company’s large language model. Solar Open 100B, which has 100 billion parameters, was selected for a government project to build an independent foundation model.

Upstage CEO Sung Hoon Kim held an on-site briefing at the company’s Gangnam office on Jan. 2 for about 70 industry and government officials. He said the claim was false and called for an apology.

The event was also livestreamed on YouTube, drawing about 2,000 concurrent viewers. Kim disclosed key development data, including model training logs and checkpoints.

Kim said Solar Open 100B is a from-scratch model trained in-house from the beginning. He said that referencing another model’s architecture ideas or inference code style is acceptable even in from-scratch development, but that using another model’s trained weights as they are would not count as from scratch. In an LLM, weights are the numerical parameters learned during training that determine how strongly each piece of input influences the output.

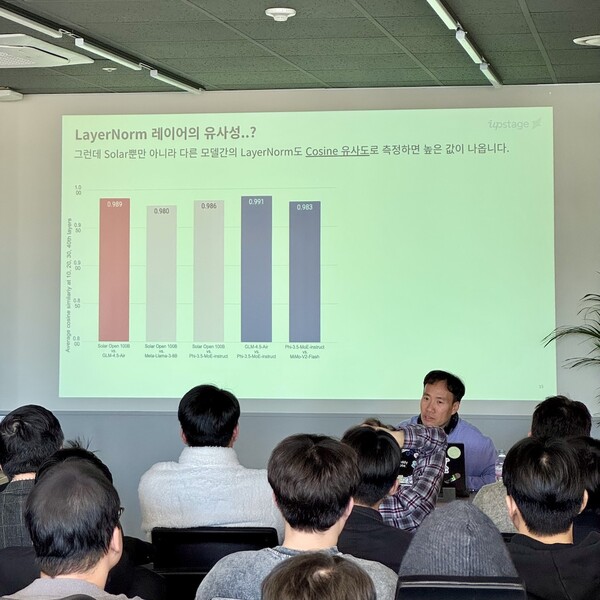

Kim said the claim that the company reused another model’s weights, which was based on LayerNorm similarity, was nothing more than a statistical illusion.

LayerNorm, or layer normalization, rescales the values passing through a layer to a consistent mean and variance so that AI model training proceeds stably and quickly. Kim said the parameters at issue account for only about 0.0004 percent of the entire model, and that the comparison instead shows the remaining 99.9996 percent of Solar Open is completely different from other models.
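For readers unfamiliar with the operation, the following is a minimal sketch of layer normalization in its textbook form, not code from Solar Open or any other model. The function name `layer_norm`, the `eps` value, and the sample activations are illustrative assumptions. The `gain` and `bias` vectors are the learned LayerNorm parameters of the kind the dispute concerns.

```python
import math

def layer_norm(x, gain, bias, eps=1e-5):
    """Textbook layer normalization over one vector of activations."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)
    # Normalize to zero mean and unit variance, then apply the learned
    # per-element scale (gain) and shift (bias).
    return [g * (v - mean) / math.sqrt(var + eps) + b
            for v, g, b in zip(x, gain, bias)]

# Hypothetical activations; gain=1 and bias=0 are the usual initial values.
activations = [2.0, 4.0, 6.0, 8.0]
normalized = layer_norm(activations, gain=[1.0] * 4, bias=[0.0] * 4)
print([round(v, 3) for v in normalized])
```

Because these parameters are a single short vector per layer, they make up a very small share of a model with billions of weights, which is consistent with the proportion Kim cited.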

He also said the cosine similarity used to judge LayerNorm similarity was not an appropriate standard of comparison. He said cosine similarity is a simple metric that compares only the direction of two vectors, and that high similarity between independent models is a natural phenomenon because LayerNorm layers in language models typically share similar structures and characteristics.
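The point about vector direction can be illustrated with a small sketch. It assumes, purely for illustration, that LayerNorm gain values in two unrelated models are positive and clustered around 1.0, and it uses randomly generated numbers rather than weights from Solar Open or GLM-4.5-Air. The helper names and the `spread` value are hypothetical.

```python
import math
import random

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors; ignores their magnitudes."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def fake_layernorm_gains(dim, seed, spread=0.1):
    # Assumption: gains sit near 1.0, each model with its own random noise.
    rng = random.Random(seed)
    return [1.0 + rng.gauss(0.0, spread) for _ in range(dim)]

def fake_zero_centered_weights(dim, seed, spread=0.1):
    # Contrast case: weights centered on 0, as in many ordinary weight matrices.
    rng = random.Random(seed)
    return [rng.gauss(0.0, spread) for _ in range(dim)]

dim = 4096
ln_sim = cosine_similarity(fake_layernorm_gains(dim, 1),
                           fake_layernorm_gains(dim, 2))
zc_sim = cosine_similarity(fake_zero_centered_weights(dim, 1),
                           fake_zero_centered_weights(dim, 2))
print(f"near-one vectors:      {ln_sim:.3f}")
print(f"zero-centered vectors: {zc_sim:.3f}")
```

Under these assumptions the two independently generated gain vectors score close to 1, while the zero-centered vectors score close to 0, even though neither pair shares any values. The sketch shows only why a high score on such vectors is weak evidence; it does not settle the dispute itself.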

Kim also rejected claims that Solar Open used another model’s tokenizer as is. He said the other model had about 150,000 vocabulary items, while Solar Open has 196,000, and the actual shared vocabulary is only about 80,000, or 41 percent. He said tokenizers of the same family generally have more than 70 percent overlap, calling this quantitative evidence that Solar Open has a separate tokenizer built independently.
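The overlap figure can be reproduced with simple set arithmetic. The sketch below uses toy vocabularies invented for illustration, not the real tokenizers, and assumes the 41 percent is measured against Solar Open’s own vocabulary size, since the article does not state the denominator. The function name `vocab_overlap` is hypothetical.

```python
def vocab_overlap(vocab_a, vocab_b):
    """Return the shared-token count and its share of vocab_a."""
    set_a, set_b = set(vocab_a), set(vocab_b)
    shared = set_a & set_b
    return len(shared), len(shared) / len(set_a)

# Toy vocabularies (hypothetical), standing in for two tokenizers.
toy_solar = {"the", "model", "학습", "토큰", "weights"}
toy_other = {"the", "model", "weights", "模型"}
shared_count, share = vocab_overlap(toy_solar, toy_other)
print(shared_count, f"{share:.0%}")

# Figures quoted by Kim: about 80,000 shared items out of 196,000.
reported_share = 80_000 / 196_000
print(f"{reported_share:.1%}")
```

With the quoted figures the ratio comes to about 40.8 percent, which rounds to the 41 percent Kim cited.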

Kim said claims that the model’s structure and code are similar to those of a specific model do not match technical reality. He said major open-source LLM developers, including Upstage, do not disclose their training code externally. While it is possible to get ideas from publicly available model cards and architecture descriptions, he said, developing a model by reusing training code that is not accessible is not technically feasible, which he described as a common industry view.

Kim also said allegations that the company took a specific model’s source code and manipulated the licence were not true. Upstage released its inference code so that more developers could easily try Solar Open, and in the process used parts of an open-source codebase published by Hugging Face to improve serving compatibility. He said such code is commonly used under the Apache 2.0 licence, which anyone can use, and that the company updated the wording to accurately cite the licence source.

The controversy began on Jan. 1 after Seok-hyun Ko, CEO of AI startup Psionic AI, raised suspicions on GitHub, a developer platform, that Solar Open 100B is a derivative model based on Zhipu AI’s GLM-4.5-Air.

The claim drew additional attention because first-stage evaluation results for the five elite teams participating in the independent foundation model development project are set to be released in January. One team will be eliminated in the first evaluation, and Upstage mounted a company-wide response.

Many in the AI community also said Ko’s post did not make a persuasive case that Solar Open 100B was derived from GLM-4.5-Air. Kevin Ko, a machine learning researcher at Kakao, posted that Solar-Open-100B was not derived from GLM-4.5-Air.

Kim said healthy debate and exchanges of opinion are welcome, but that presenting false information as established fact seriously undermines the efforts of Upstage and the government as they work toward making South Korea one of the world’s top three AI powers. He said Upstage will continue to demonstrate world-class technology through transparent disclosure and work to expand South Korea’s AI ecosystem.