With the AI Basic Act now in full effect, watermarks have become mandatory on output made with generative AI. But videos and posts openly explaining how to remove them are spreading on social media platforms, already raising questions about the measure’s effectiveness. Critics point to a regulatory gap at the distribution stage.

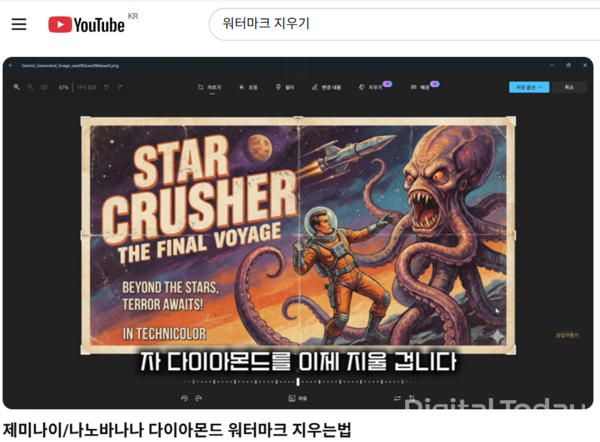

According to industry sources on Feb. 6, videos showing how to remove AI watermarks have recently been spreading on social media platforms including YouTube, under titles such as “How to erase SORA watermarks” and “How to erase Nanobanana diamond marks.”

These videos explain how to remove AI-generated content labels using built-in editing tools such as “Generative erase” on Windows or “Clean Up” on Mac. Users in online communities such as Reddit are also recommending free and paid watermark removers, including MagicEraser, Bylo.ai and Somake.

Watermarks are meant to distinguish AI-generated output from real content. Because the harm expected without them is significant, major governments and AI companies have been addressing the issue since generative AI first began to spread.

Former U.S. President Joe Biden issued an executive order in October 2023 on the “Safe, Secure, and Trustworthy Development and Use of Artificial Intelligence.” The Commerce Department then prepared guidelines presenting Content Credentials, the provenance standard of the Coalition for Content Provenance and Authenticity (C2PA), as a technical means of identifying AI-generated content. Major generative AI companies such as OpenAI and Google also follow these guidelines.

The European Union brought its AI Act into force in August 2024, and its transparency rules, including mandatory watermarking, will take effect in August this year.

South Korea also put its AI Basic Act into effect on Jan. 22, requiring AI companies to apply visible or invisible watermarks to AI-generated output. Violations can be subject to administrative fines of up to 30 million won. Content that is difficult to distinguish from real material, such as deepfakes, must be marked in a way that people can clearly recognize.

However, the rules governing the distribution stage remain insufficient. Even if an AI company attaches a watermark, there is no legal basis for sanctioning either the user or the platform when a user deliberately removes or damages it and then distributes the content on a platform.

Experts agree on the need for regulation but say the approach should be carefully considered. Heon-young Kwon (권헌영), a professor at Korea University’s Graduate School of Information Security, said, “We should not stop at simply mandating watermark insertion; the law must also be effective against acts that bypass or remove watermarks.” He added, “Still, the targets and scope of sanctions must be approached carefully. For now, both the technology and the policy are still under deliberation, so the issue needs to be watched over time.”

The National Assembly has moved to draft supplementary legislation. On Jan. 20, In-cheol Cho (조인철), a Democratic Party lawmaker on the National Assembly’s Science, ICT, Broadcasting and Communications Committee, introduced a partial amendment to the Act on Promotion of Information and Communications Network Utilization and Information Protection. The bill aims to prevent the spread of illegal videos such as deepfakes by imposing labeling obligations on “AI-generated content distributed through information and communications networks.”

The amendment would impose labeling obligations on those who post such content, require platform operators to maintain and manage the labels, ban users from arbitrarily removing or damaging labels on AI-generated content, and establish grounds for punishing violations. By combining companies’ “labeling obligation” with users’ “preservation obligation,” it seeks to extend responsibility for managing such content to the distribution stage.

The bill is currently under review by the committee in charge, the Science, ICT, Broadcasting and Communications Committee. It must then be approved at a full committee meeting, pass the Legislation and Judiciary Committee’s review of its legal consistency and wording, and clear a plenary vote.