Microsoft has unveiled Rho-alpha, a robotics model for physical AI derived from its Phi series of vision-language models. With Rho-alpha, the company aims to help physical systems adapt more flexibly.

The company said robots have performed well for decades in structured environments such as assembly lines, where tasks are predictable and strictly defined. With the emergence of vision-language-action (VLA) models for physical systems, it added, robots can now perceive, reason and act autonomously alongside humans even in complex, undefined and less structured environments.

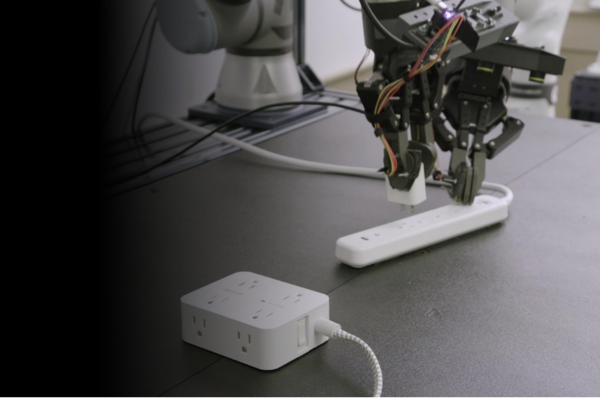

Rho-alpha converts natural-language commands into control signals so that a robot can perform bimanual manipulation. The company said the model stands apart by extending the perception and learning modalities beyond those commonly used in existing VLA models.

Ashley Llorens (애슐리 로렌스), vice president of Microsoft Research Accelerator, said, "On the perception side, we have added tactile sensing, and work is under way to support additional sensing modalities such as force." He added, "On the learning side, we designed the model to keep improving by learning from human feedback even while it is deployed in the field."

Microsoft is also running the Rho-alpha Research Early Access Program for partners that want to adopt Rho-alpha in their robot systems or identify use cases for it.

Abhishek Gupta (아비섹 굽타), a professor at the University of Washington, said, "Teleoperating robot systems to generate training data has become an industry standard, but there are still many environments where teleoperation is impractical or impossible. In collaboration with Microsoft Research, we are generating diverse synthetic demonstration data that combines simulation and reinforcement learning, and using it to enrich pretraining datasets collected from real robots."