Grepp, which operates the AI-based online coding test platform Monito, said on Jan. 15 that it has launched AI Assist, a tool that directly evaluates developers’ ability to collaborate with AI.

Grepp said it developed AI Assist to meet demand from workplaces where AI tools such as ChatGPT and GitHub Copilot are widely used, and to narrow the gap between real-world development settings and hiring assessment environments.

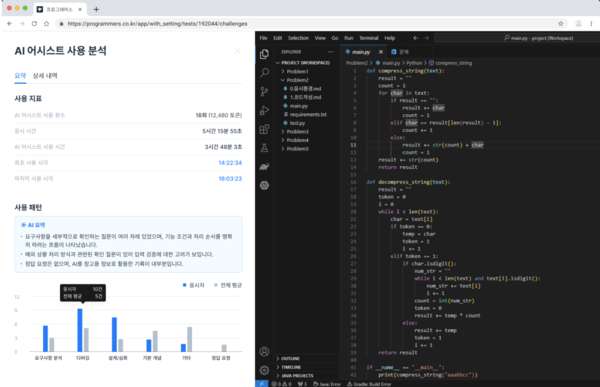

According to the company, AI Assist provides a standardised AI environment for directly measuring test takers’ AI collaboration skills. As in an actual work environment, test takers can interact with the AI in real time to generate code or fix errors. Hiring companies can then review and analyse the entire problem-solving process, including the final submission, the quality of the prompts entered and how candidates went about verifying the AI’s answers.

The company also stressed that it presents each applicant’s AI proficiency in a form suited to hiring decisions, drawing on detailed data such as the number of questions asked, token usage and response wait times.

Grepp CEO Seong-su Lim said, "Most developers work with AI in the workplace, so banning AI only in assessments is a contradiction that is out of step with the times." He continued, "The core competency of developers is now the technical judgement to verify and optimise the answers AI suggests." He added, "Building on Grepp's AI supervision technology, we are creating an evaluation standard that properly measures developers' AI collaboration skills." He also said, "By supporting the measurement of how well candidates use AI in development work, we will make hiring more efficient so that AI Assist becomes a standard for developer hiring."