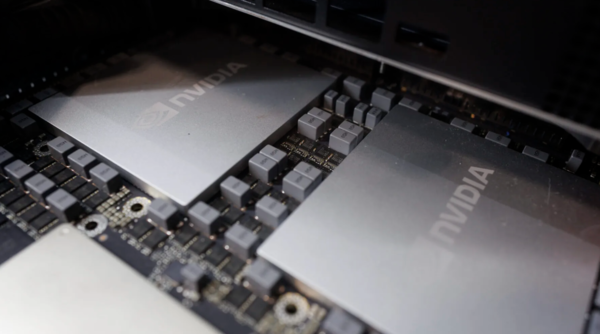

Speculation is growing in the market that Nvidia will switch the memory in its next-generation AI inference accelerator, Rubin CPX, from GDDR7 back to high-bandwidth memory (HBM). If that happens, HBM demand forecasts for SK Hynix and Samsung Electronics would need a sweeping revision, and the semiconductor industry is watching closely.

Rubin CPX is designed to handle prefill, the stage of AI inference that uses relatively little memory. It is a strategic product that adopts GDDR7 instead of HBM with the aim of cutting inference costs to one-tenth of Blackwell’s. Industry sources say, however, that even prefill requires high memory bandwidth and capacity in real production environments, and that GDDR7 is being judged insufficient in performance efficiency. With GDDR7’s capacity limits and early supply instability now seen as spreading from the consumer graphics card market into data centres, there is speculation that Nvidia will ultimately switch to HBM.

The speculation is gaining traction against the backdrop of explosive growth in inference demand, confirmed on Nvidia’s fourth-quarter earnings conference call. On the call, held on Feb. 25 local time, Nvidia Chief Executive Jensen Huang said, "Compute equals revenues," adding that a shift to agentic AI is boosting token demand. Nvidia’s fourth-quarter data centre revenue was $62.0 billion, up 75 percent from a year earlier. Full-year data centre revenue came to $194.0 billion, about 13 times the level when ChatGPT emerged in 2023.

The issue is that expanding inference workloads are also raising memory capacity requirements. Huang said agentic systems spawn multiple agents at the same time, sharply increasing token generation. Citing Anthropic’s Claude Cowork and OpenAI Codex, he also said, "We have reached useful intelligence," and that the urgency to secure computing capacity has risen. Chief Financial Officer Colette Kress said, "Supply shortages of advanced architecture will persist."

◆Rubin roadmap to be unveiled at GTC; focus on direction of HBM supply outlook

The inference market is growing steeply enough to become a factor in the HBM supply outlook. Huang said Meta and Anthropic are deploying "millions of Blackwell and Rubin GPUs." Nvidia has decided to invest $10.0 billion in Anthropic. Kress said the 2026 capital expenditure outlook for the top five cloud companies has risen by about $120.0 billion since the start of the year, to nearly $700.0 billion.

This environment underpins the "Rubin CPX HBM U-turn" theory. Micron recently said GDDR7 capacity could become a performance bottleneck, and concerns have also surfaced that early GDDR7 supply problems could delay the release of Nvidia’s consumer graphics cards, including the RTX 50 SUPER series. The theory has gained strength as supply instability that began in the consumer market has come to be seen as reaching data centres.

Technically, however, switching from GDDR7 to HBM is not a simple component swap. HBM requires through-silicon via (TSV) technology and 2.5D packaging (CoWoS), while Rubin CPX is designed to avoid both and use a standard PCB substrate to lower costs. Changing the memory would therefore effectively mean redesigning the chip.

The key question is whether Rubin CPX’s GDDR7 strategy can meet memory demand in large-scale inference environments. The U-turn theory itself is likely to remain market speculation, but it is unclear whether even 128GB of GDDR7 will be enough to support agentic AI performance going forward. Nvidia is set to unveil a detailed roadmap for the Rubin platform in its GTC keynote in San Jose on March 16. The direction of the HBM supply outlook is expected to become clearer then.